Big Data

Distributed machine learning on FRACTAL with Spark MLlib

Co-author: O. Akintola

This project designed and evaluated a distributed machine learning pipeline for land-cover classification using the FRACTAL dataset which combines LiDAR point clouds and multispectral imagery. The workflow used PySpark for distributed preprocessing and Spark MLlib for model training, with a focus on scalability and efficiency across different data volumes and cluster setups.

We implemented a Random Forest classifier and used stratified sampling to deal with class imbalance. Performance was tested on different dataset fractions and executors, using both AWS EMR and a local Spark cluster. The results showed sub-linear speedup as executors increased, and the pipeline was refined through feature engineering (e.g., NDVI, height normalization) and improved partitioning strategies for better distributed performance.

BigEarthNet distributed classification with deep learning using TensorFlow

Co-author: B. Peres

This project implements a distributed deep learning pipeline for land-cover classification on the BigEarthNet dataset, combining Sentinel-1 and Sentinel-2 data. We use Apache Spark for large-scale preprocessing, Petastorm for efficient Parquet-based data loading, and TensorFlow (with MirroredStrategy) for multi-GPU training.

The workflow constructs 5-channel tensors and trains a compact UNet-like CNN for pixel-wise classification. Experiments were conducted across varying data fractions and GPU counts to evaluate scalability and performance. Results show sub-linear speedup due to synchronization and I/O overheads, along with accuracy degradation at larger batch sizes.

Deep Learning for Computer Vision

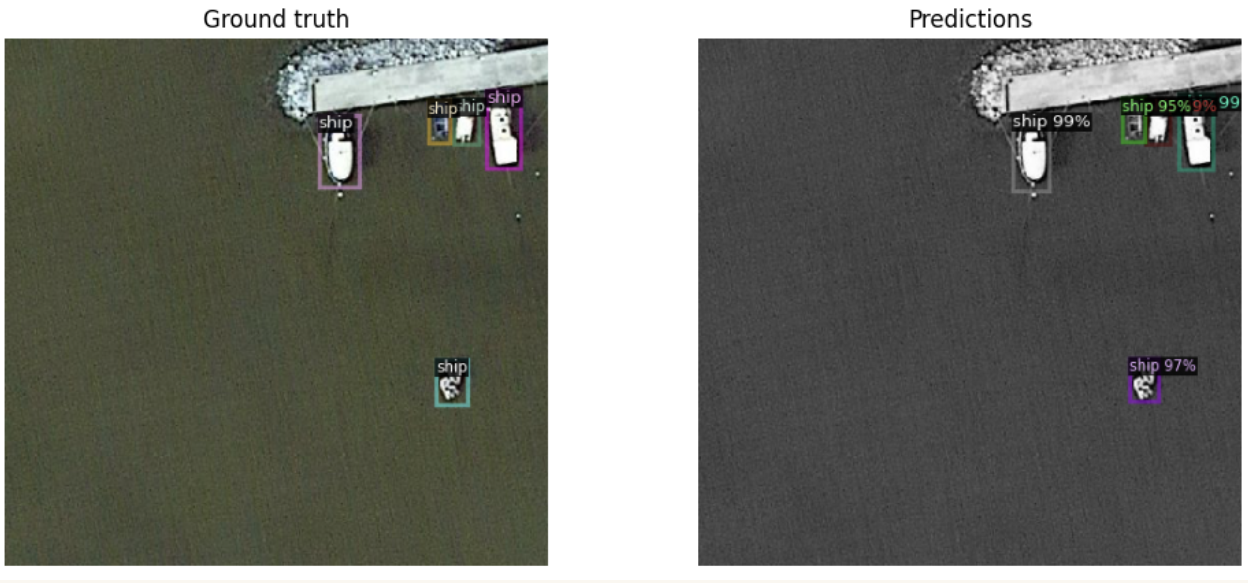

Ship detection (YOLO vs Faster R-CNN)

Co-author: A. Moreno

This project compared one-stage and two-stage object detection approaches for ship detection in satellite imagery, using a small Kaggle dataset (~600 images with PASCAL VOC annotations). We trained and evaluated YOLOv8 / YOLOv11 and a Faster R-CNN model (via Detectron2) under a consistent 80/20 split. YOLO models showed slightly higher AP and faster training due to efficient one-stage detection and larger batch sizes, while Faster R-CNN produced more precise bounding boxes but required smaller batches and longer training. Overall, results reflect the typical trade-off that is YOLO performed better in terms of speed and aggregate metrics, whereas Faster R-CNN achieved more accurate localisation, especially at stricter IoU thresholds.

Machine Learning

Multimodal crop disease classification (UAV Imagery)

Co-authors: S. Raj Sharma, S. Mohamed

This project was developed as part of the Beyond Visible Spectrum: AI for Agriculture 2026 competition, focusing on automated wheat disease classification using aligned RGB, multispectral (MS), and hyperspectral (HS) UAV imagery.

We explored multiple approaches, including classical machine learning on hand-crafted spectral and texture features, gradient boosting models, and deep learning-based late-fusion CNNs combining all modalities. Feature engineering included vegetation indices (NDVI, NDWI, SAVI), texture descriptors, and hyperspectral statistics, alongside careful preprocessing to handle noisy HS bands.

The final model was an improved late-fusion CNN with separate modality encoders, auxiliary classification heads, and focal loss to address class imbalance. We avoided PCA for hyperspectral data and instead used a learnable spectral projection combined with hand-crafted descriptors to preserve discriminative information.

Crop yield estimation

Co-authors: A. Moreno, E. Ogallo

In this project we applied machine learning to structured agricultural data to predict crop yield (tons per hectare). We worked with a large-scale synthetic dataset (1 million samples) containing environmental, soil, crop, and management variables.

We implemented a full ML pipeline in Python, including data cleaning, stratified train-test splitting, and feature engineering using standardization and one-hot encoding via scikit-learn. We then evaluated multiple regression models—ridge regression, random forest, and histogram-based gradient boosting—against a baseline dummy regressor. Model performance was assessed using R², RMSE, and MAE, with cross-validation and hyperparameter tuning to ensure robust generalization. All models achieved strong performance (R² ≈ 0.91), with minimal overfitting due to the size and balance of the dataset. Ridge regression performed best overall, offering comparable accuracy to ensemble methods while being computationally efficient and more interpretable. Here is the link to the GitHub repository: 🔗 GitHub